i used to connect my vinyl record player to a speaker by hand every time i wanted to listen to records.

touching the wires every time was annoying and impractical, so i decided to use my headless linux server, which happens to sit nearby, as a network audio bridge and listen to records through my studio pc.

this article describes how i built an automated analog-to-network pipeline using pipewire, vban, and wireplumber.

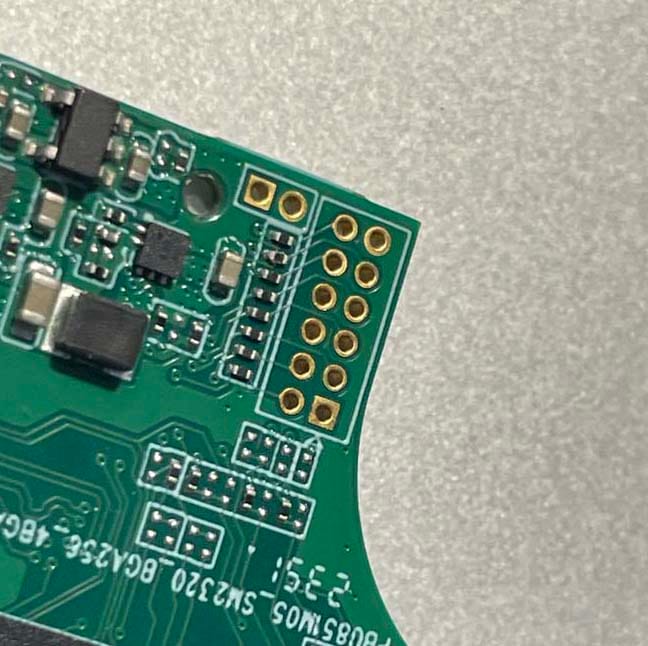

hardware setup

the physical setup was straightforward. i connected the audio output of the record player to the line-in jack on the server’s motherboard.

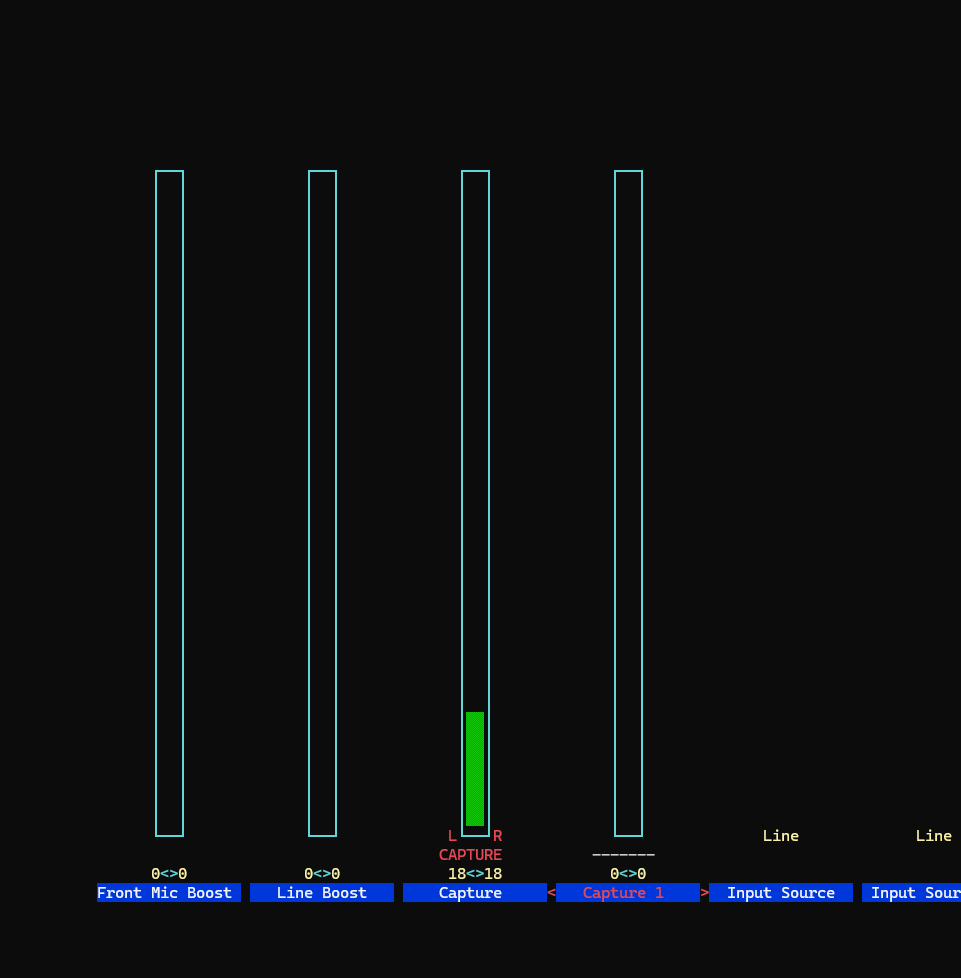

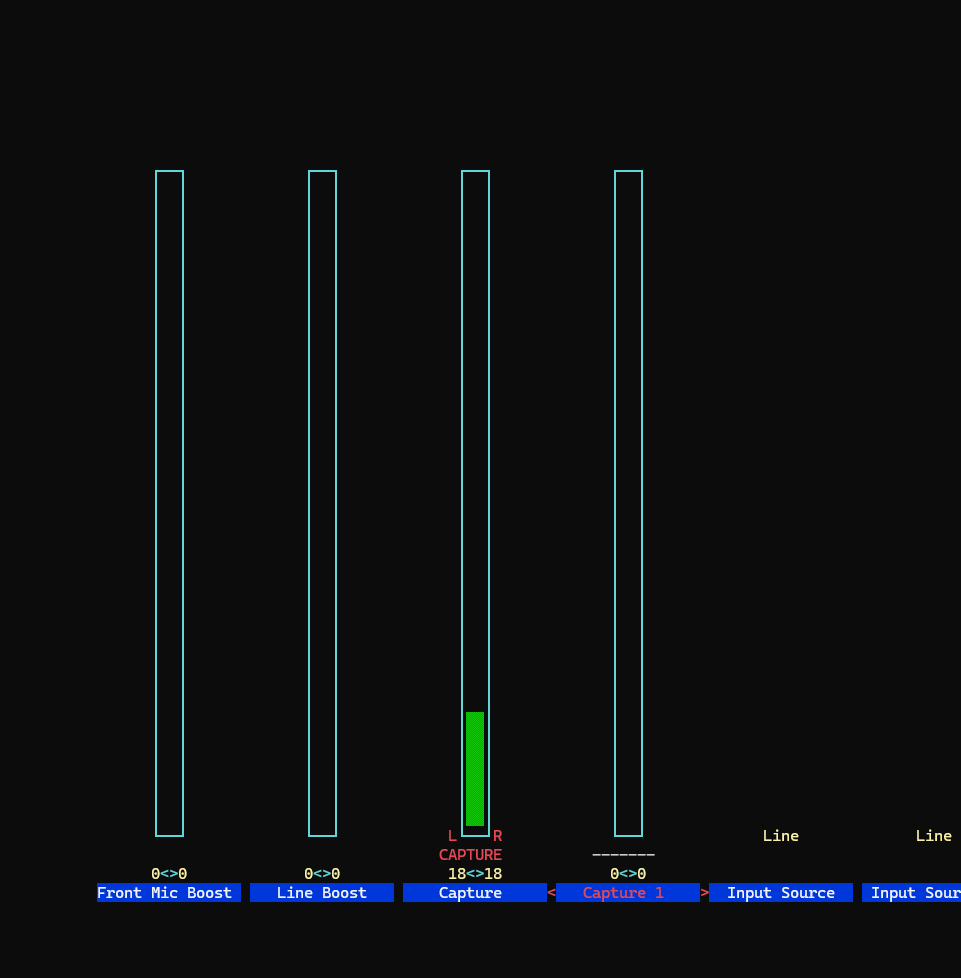

on the linux side i added my user to the audio group to allow access to the sound device and used alsamixer to unmute the capture channel and enable the line-in input.

at this point the server could capture audio from the record player.

phase 1: proof of concept

before automating the entire pipeline, i first confirmed that audio could be transmitted over the network with low latency.

for this experiment i used pipewire together with the vban network protocol.

i created the following configuration file:

~/.config/pipewire/pipewire.conf.d/vban-send.conf

this file loads the libpipewire-module-vban-send module and configures it to send audio packets to my windows pc on port 6980.

a minimal configuration looks like this:

    context.modules = [
      { name = libpipewire-module-vban-send
        args = {
          destination.ip = "192.168.1.50"
          destination.port = 6980
          sess.name = "LineInStream"
          sess.media = "audio"
          audio.rate = 48000
          audio.channels = 2
          audio.format = "S16LE"
          audio.position = [ FL FR ]
          stream.props = {
            node.name = "vban-linein-send"
            node.description = "VBAN Line-In Sender"
            media.class = "Audio/Sink"
          }
        }
      }
    ]
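as a rough sanity check on what this config asks of the network, the raw pcm payload rate follows directly from the three audio settings. the sketch below is plain arithmetic, not a measurement; pcm_bitrate is a hypothetical helper name, and vban and udp headers add a little on top of the figure it prints.

```shell
# pcm_bitrate RATE CHANNELS BITS: print the raw PCM payload bitrate in bit/s.
pcm_bitrate() {
    echo $(( $1 * $2 * $3 ))
}

# values from the config above: 48 kHz, 2 channels, 16-bit samples (S16LE)
pcm_bitrate 48000 2 16   # prints 1536000
```

that is roughly 1.5 mbit/s of audio payload, which a wired home network should carry comfortably.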

this configuration creates a pipewire sink named vban-linein-send. any audio routed into this sink is transmitted as a vban stream.
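one way to confirm that the sink really exists before playing anything is to look for its node.name in the output of pw-cli ls Node. a minimal sketch, assuming pipewire has been restarted since the file was added; has_node is a hypothetical helper name, not part of pipewire.

```shell
# has_node NAME: read `pw-cli ls Node` output on stdin and succeed if it
# contains a node whose node.name is exactly NAME.
has_node() {
    grep -qF "node.name = \"$1\""
}

# usage on the server (not executed here):
#   systemctl --user restart pipewire
#   pw-cli ls Node | has_node vban-linein-send && echo "sink is present"
```

if the check fails, the config fragment was probably not picked up, and the pipewire user journal is the next place to look.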

to test the connection i played an audio file directly into the new sink:

pw-play --target=vban-linein-send test.wav

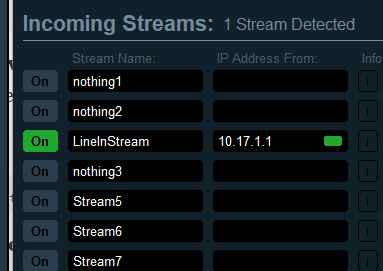

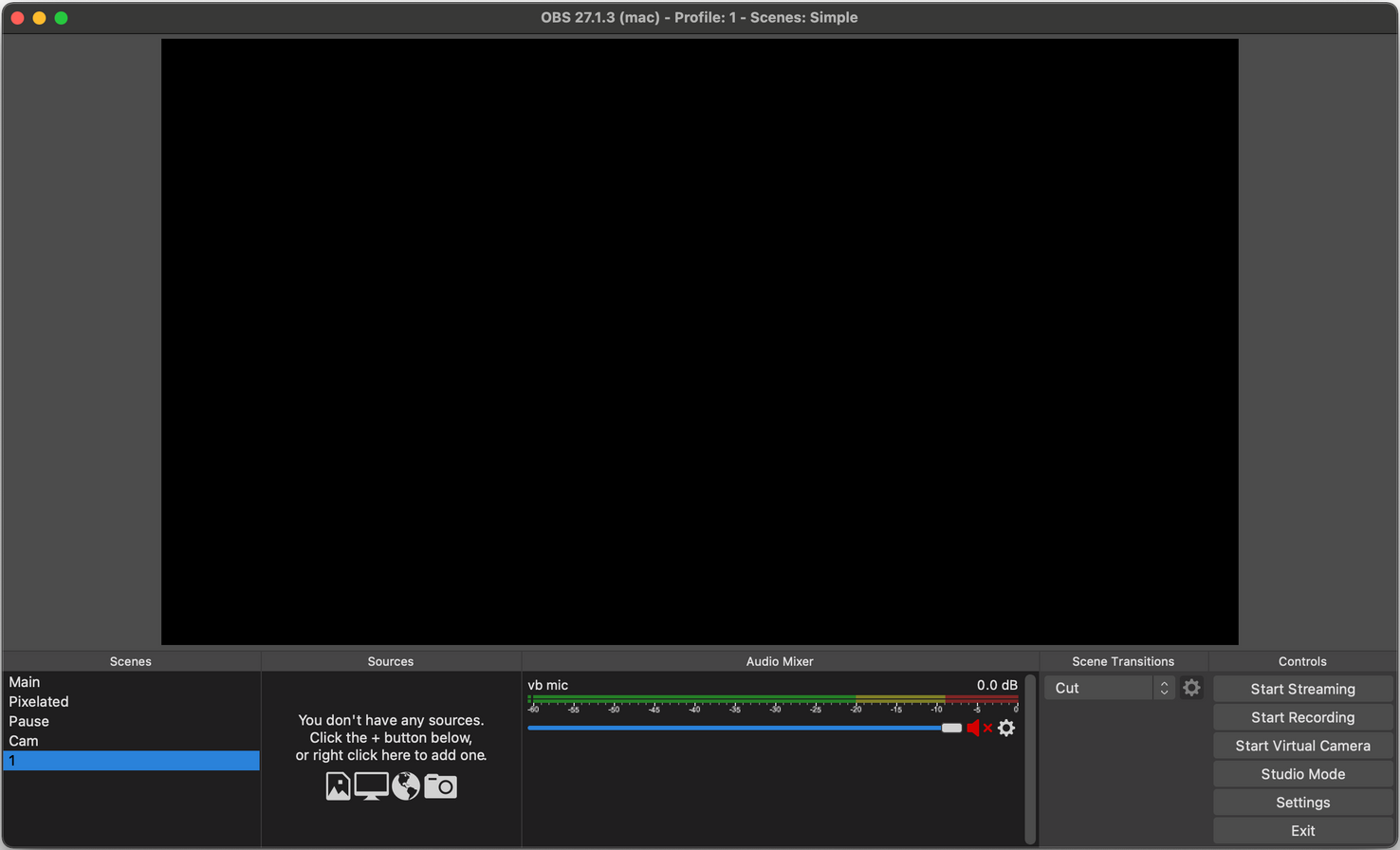

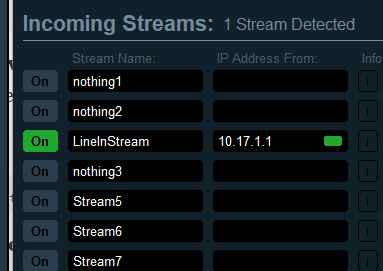

on my windows pc i opened voicemeeter banana, enabled the vban receiver, and configured it to listen for the stream named LineInStream.

the test audio file played through the speakers immediately, confirming that the network connection worked. the voicemeeter project is awesome; consider supporting it.

understanding the audio graph

pipewire represents audio routing as a graph of connected nodes. once the capture device and the vban sender are active, the signal path looks like this:

    vinyl player
        │
        ▼
    motherboard line-in (alsa capture node)
        │
        ▼
    pipewire audio graph
        │
        ▼
    vban send node (libpipewire-module-vban-send)
        │
        ▼
    udp network stream (through a home network)
        │
        ▼
    windows pc (voicemeeter vban receiver)
        │
        ▼
    hi-fi speakers

the remaining task was to connect the capture node to the vban node automatically during startup.

phase 2: creating a persistent setup

the goal was to make the system operate automatically. if the server reboots, it should capture audio from the line-in port and stream it to the windows machine without manual intervention.

first, i enabled systemd lingering so that pipewire and wireplumber could run without an active login session:

loginctl enable-linger your-username

next, i wrote a wireplumber lua script using objectmanager. the script detects the alsa capture ports and links them to the vban sender ports whenever both are present in the pipewire graph.
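the same links can also be made by hand with pw-link, which is a useful way to check the port names before automating anything. the sketch below is not the lua script itself. it is a dry-run helper (pair_ports, a hypothetical name) that prints one pw-link command per port pair, assuming both nodes list their channels in the same order (FL before FR). SRC is a placeholder for the alsa capture node's name, which depends on the hardware.

```shell
# pair_ports OUTS INS: print one "pw-link OUT IN" command per port pair,
# matching two whitespace-separated port lists position by position.
pair_ports() {
    outs="$1"
    set -- $2                      # positional parameters now hold the input ports
    for out in $outs; do
        [ "$#" -gt 0 ] || break    # stop when the input ports run out
        echo "pw-link $out $1"
        shift
    done
}

# usage on the server (not executed here); SRC is a placeholder node name:
#   pair_ports "$(pw-link -o | grep "^$SRC:")" \
#              "$(pw-link -i | grep "^vban-linein-send:")"
# review the printed commands, then pipe the same call into `sh` to apply them.
```

printing the commands first keeps the step reversible: nothing in the graph changes until the output is actually executed.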

while implementing this persistent configuration i encountered two issues.

hurdle 1: line-in gain reset

during the first full test the audio sounded distorted.

the cause was the default line-in gain configured by the motherboard codec. the capture boost was too high for the signal coming from the record player.

lowering the gain in alsamixer removed the distortion. however, after each reboot the gain returned to its original value.

this happens because modern pipewire systems are managed by wireplumber, which applies its own mixer state during startup and overrides alsa settings.

the correct approach was to configure the volume through wireplumber.

first i located the capture device:

wpctl status

then i set the capture level to 10% using the id that wpctl status reported for the device (48 on my system):

wpctl set-volume 48 0.10

wireplumber stores this setting in its state database and reapplies it automatically after each restart.
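numeric ids such as 48 are assigned at runtime and can differ between systems, so a hedged alternative is to address the capture device through wpctl's special name @DEFAULT_AUDIO_SOURCE@. this assumes the line-in is the default source, which is likely on a server with a single capture device but not guaranteed. parse_volume is a hypothetical helper that extracts the number from wpctl get-volume output, so the level can be checked after a reboot.

```shell
# parse_volume: read `wpctl get-volume` output on stdin (for example
# "Volume: 0.10" or "Volume: 0.10 [MUTED]") and print only the numeric level.
parse_volume() {
    awk '/^Volume:/ { print $2 }'
}

# usage on the server (not executed here):
#   wpctl set-volume @DEFAULT_AUDIO_SOURCE@ 0.10
#   wpctl get-volume @DEFAULT_AUDIO_SOURCE@ | parse_volume
```

running the second command again after a restart shows whether the stored level was actually reapplied.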

hurdle 2: the script was not loaded

after solving the volume issue, i placed my lua script in:

~/.local/share/wireplumber/scripts/vban-autolink.lua

and created a configuration fragment:

~/.config/wireplumber/wireplumber.conf.d/99-vban-autolink.conf

this fragment instructs wireplumber to load the script during startup.
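for reference, a fragment of this kind might look like the sketch below. it assumes wireplumber 0.5 or later, where components and profiles are declared in .conf files (0.4 releases used lua config files instead), and the feature name custom.vban-autolink is made up for illustration. write_autolink_fragment is a hypothetical helper that writes the file into a directory of your choice.

```shell
# write_autolink_fragment DIR: write a WirePlumber 0.5-style fragment into DIR.
# The fragment registers vban-autolink.lua as a component and requires it in
# the main profile. "custom.vban-autolink" is an illustrative feature name.
write_autolink_fragment() {
    mkdir -p "$1" || return 1
    cat > "$1/99-vban-autolink.conf" <<'EOF'
wireplumber.components = [
  {
    name = vban-autolink.lua, type = script/lua
    provides = custom.vban-autolink
  }
]

wireplumber.profiles = {
  main = {
    custom.vban-autolink = required
  }
}
EOF
}

# usage on the server (not executed here):
#   write_autolink_fragment ~/.config/wireplumber/wireplumber.conf.d
#   systemctl --user restart wireplumber
```

the component entry tells wireplumber where the script is, and the profile entry is what makes it load at startup.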

after rebooting the server the audio was still silent. investigation showed that the script was not being loaded.

the reason is related to wireplumber’s configuration hierarchy. if the file wireplumber.conf does not exist in the user configuration directory, wireplumber uses the system configuration in /usr/share/wireplumber and ignores user configuration fragments.

the solution was to copy the base configuration file:

cp /usr/share/wireplumber/wireplumber.conf ~/.config/wireplumber/wireplumber.conf

once this file existed in the user configuration directory, wireplumber detected the custom fragment and loaded the lua script correctly.

final result

after resolving these issues the system operates automatically.

when the server starts:

- pipewire launches in the background

- wireplumber restores the capture volume

- the vban module initializes

- the lua script links the line-in capture ports to the vban sender

from that moment the server continuously transmits the audio signal from the record player to the windows pc in my studio. in voicemeeter i can then route the incoming vinyl stream to any output device, such as the sound bar, the studio hi-fi speakers, or headphones.